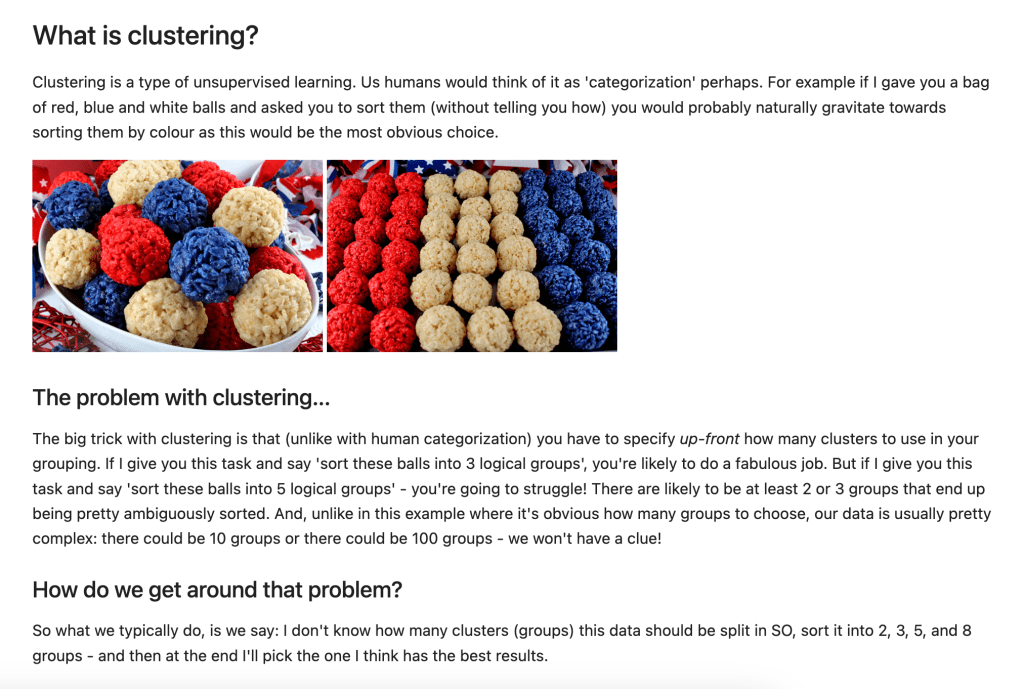

Clustering is a type of unsupervised learning. Us humans would think of it as ‘categorization’ perhaps. For example, if I gave you a bag of red, blue and white balls and asked you to sort them (without telling you how) you would probably naturally gravitate towards sorting them by colour as this would be the most obvious choice.

The problem with clustering…

The big trick with clustering is that (unlike with human categorization) you have to specify up-front how many clusters to use in your grouping. If I give you this task and say ‘sort these balls into 3 logical groups’, you’re likely to do a fabulous job. But if I give you this task and say ‘sort these balls into 5 logical groups’ – you’re going to struggle! There are likely to be at least 2 or 3 groups that end up being pretty ambiguously sorted. And, unlike in this example, where it’s obvious how many groups to choose, our data is usually pretty complex: there could be 10 groups or there could be 100 groups – we often won’t have a clue!

How do we get around that problem?

So what we typically do, is we say: I don’t know how many clusters (groups) this data should be split in SO, sort it into say 2, 3, 5, and 8 groups – and then at the end, I’ll pick the one I think has the best results.

How do we pick the best number of groups?

The elbow method is commonly used to evaluate which is the optimum number of clusters to choose, however, the silhouette method can sometimes give a clearer indication.

Notebook tutorial

I always find the best way to understand something is to see it in action. So in the notebook on my Github repo, I’ve put together an example from the world of NLP: we’ve got a bunch of words, and we want to group them according to their similarities:

- Car stuff: ‘car’, ‘carburettor’, ‘engine’, ‘wheel’, ‘steering’, ‘gear’

- Computer stuff: ‘computer’, ’email’, ‘screen’, ‘keyboard’, ‘programming’

- Animal stuff: ‘dog’, ‘cat’, ‘cow’, ‘sheep’, ‘chicken’, ‘pig’

- Utensils stuff: ‘knife’, ‘fork’, ‘spoon’, ‘plate’, ‘bowl’, ‘cup’

- Building stuff: ‘house’, ‘building’, ‘dwelling’, ‘apartment’, ‘accommodation’

Ideally, our model should put all car stuff in the same group, all computer stuff in another group and so on – without us having given it any indication of these groupings upfront – magic! In this toy example, we already know how the words should be clustered, so we’ll be able to evaluate the metrics and our actual results against the ground truth and see how it all works quite clearly! You can see the results in the notebook here: